Every second, social media, mobile apps, IoT devices, banking systems, and digital marketing platforms (ads, websites, and campaigns) generate massive amounts of data. Two closely related disciplines turn this data into useful insights: Big Data Analytics and Data Engineering.

What is Big Data?

Big Data refers to extremely large, complex, and continuously growing datasets that cannot be efficiently handled using traditional data processing tools. These datasets come from multiple sources and require advanced technologies to store, process, and analyze them.

Types of Big Data

- Structured Data

  - Organized and stored in tables (rows & columns)

  - Easy to analyze

  - Example: Banking records, spreadsheets

- Unstructured Data

  - No fixed format

  - Difficult to process

  - Example: Videos, images, social media posts

- Semi-Structured Data

  - Partially organized

  - Example: JSON, XML files, emails
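
To make the difference between semi-structured and structured data concrete, the sketch below flattens a few nested JSON records into rows with a fixed set of columns. The records, field names, and the `to_row` helper are all hypothetical and exist only for illustration.

```python
import json

# Hypothetical semi-structured records: the fields vary between entries.
raw = '''
[
  {"id": 1, "user": {"name": "Asha", "city": "Pune"}, "tags": ["new"]},
  {"id": 2, "user": {"name": "Ben"}}
]
'''

# The fixed schema that every structured row must follow.
COLUMNS = ["id", "name", "city", "tag_count"]


def to_row(record: dict) -> dict:
    """Map one nested record onto the fixed set of columns."""
    user = record.get("user", {})
    return {
        "id": record.get("id"),
        "name": user.get("name"),
        "city": user.get("city"),  # None when the field is absent
        "tag_count": len(record.get("tags", [])),
    }


rows = [to_row(r) for r in json.loads(raw)]
for row in rows:
    print([row[c] for c in COLUMNS])
```

Every row now has the same columns, so it could be loaded into a table. Fields missing from the source show up as `None` rather than breaking the schema.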

The 3Vs of Big Data:

- Volume – Huge amounts of data (terabytes to petabytes)

- Velocity – Data generated at high speed (real-time or near real-time)

- Variety – Different types of data (structured, semi-structured, unstructured)

👉 Examples: Social media posts, online transactions, sensor data, videos

What is Big Data Analytics?

Big Data Analytics is the process of examining large datasets to uncover hidden patterns, correlations, trends, and insights that help in decision-making.

Types of Analytics:

- Descriptive Analytics – What happened?

- Predictive Analytics – What might happen?

- Prescriptive Analytics – What should be done?
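
The three types can be illustrated on one small dataset. This is a minimal sketch with made-up order counts; the straight-line forecast and the `CAPACITY` threshold are assumptions chosen for simplicity, not a recommended modelling approach.

```python
from statistics import mean

# Hypothetical daily order counts for one week (made-up numbers).
orders = [100, 110, 120, 130, 140, 150, 160]

# Descriptive: summarize what happened.
average_orders = mean(orders)


def linear_forecast(values: list) -> float:
    """Predictive: fit a least-squares line and extrapolate one step ahead."""
    n = len(values)
    xs = range(n)
    x_mean = mean(xs)
    y_mean = mean(values)
    slope = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, values)) / sum(
        (x - x_mean) ** 2 for x in xs
    )
    intercept = y_mean - slope * x_mean
    return slope * n + intercept


forecast = linear_forecast(orders)

# Prescriptive: turn the forecast into a recommended action.
CAPACITY = 165  # hypothetical daily fulfilment capacity
action = "add capacity" if forecast > CAPACITY else "keep current capacity"

print(average_orders, forecast, action)
```

In practice, each stage would use richer models and data, but the progression is the same: summarize the past, estimate the future, then recommend an action.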

Popular Tools:

- Apache Hadoop – Distributed storage and processing

- Apache Spark – Fast, in-memory data processing

- Tableau – Interactive dashboards

- Power BI – Business intelligence and reporting

What is Data Engineering?

Data Engineering is the practice of designing, building, and maintaining systems that collect, process, and store data so it can be used for analysis, reporting, and decision-making.

👉 In simple terms, Data Engineering prepares the data before it is used by analysts or data scientists.

Key Responsibilities:

- Designing data pipelines

- Performing ETL (Extract, Transform, Load) processes

- Building data warehouses and data lakes

- Managing real-time data streaming systems
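
As an illustration of the ETL responsibility above, here is a minimal sketch using only the Python standard library. The CSV content, the `orders` table, and the function names are hypothetical. An in-memory SQLite database stands in for a real data warehouse.

```python
import csv
import io
import sqlite3

# Hypothetical raw export; in practice this would come from a file or an API.
RAW_CSV = """order_id,customer,amount
1, alice ,19.99
2,BOB,5.00
3,carol,not_a_number
"""


def extract(text: str) -> list:
    """Extract: read raw rows from the CSV source."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows: list) -> list:
    """Transform: normalize names, parse amounts, drop rows that fail parsing."""
    clean = []
    for row in rows:
        try:
            amount = float(row["amount"])
        except ValueError:
            continue  # skip rows with malformed amounts
        clean.append((int(row["order_id"]), row["customer"].strip().lower(), amount))
    return clean


def load(rows: list, conn: sqlite3.Connection) -> None:
    """Load: write the cleaned rows into the target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders "
        "(order_id INTEGER PRIMARY KEY, customer TEXT, amount REAL)"
    )
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", rows)
    conn.commit()


conn = sqlite3.connect(":memory:")  # stand-in for a real warehouse
load(transform(extract(RAW_CSV)), conn)
loaded = conn.execute(
    "SELECT order_id, customer, amount FROM orders ORDER BY order_id"
).fetchall()
print(loaded)
```

Keeping the three steps as separate functions makes each one testable on its own. A scheduler could then run them as individual tasks.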

Common Tools:

- Apache Kafka – Real-time data streaming

- Apache Airflow – Pipeline scheduling and automation

- Snowflake – Cloud-based data warehouse

- Google BigQuery – Scalable analytics database

Big Data Analytics vs Data Engineering

| Feature | Big Data Analytics | Data Engineering |
|---|---|---|
| Main Goal | Extract insights | Prepare and manage data |
| Focus Area | Analysis & visualization | Data pipelines & infrastructure |
| Skills Needed | Statistics, ML, visualization | Programming, databases, ETL |
| Output | Reports, dashboards | Clean, structured datasets |

👉 In simple terms:

- Data Engineering = Foundation (data preparation)

- Big Data Analytics = Insights (decision-making)

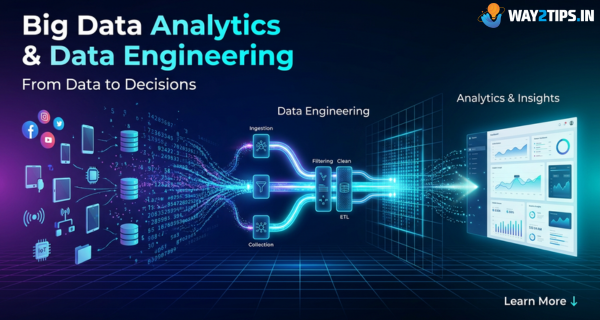

How a Data Pipeline Works

- Data Sources – Applications, APIs, sensors, databases

- Data Ingestion – Collecting data (e.g., Kafka)

- Data Processing – Cleaning and transforming (e.g., Spark)

- Data Storage – Data warehouses or data lakes

- Data Analysis – Visualization and reporting
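
The five stages above can be sketched as a chain of small Python functions. This is a toy, in-memory illustration only: the sensor readings are made up, and plain generators and a list stand in for tools such as Kafka, Spark, and a warehouse.

```python
from collections import defaultdict
from typing import Iterable, Iterator


def source() -> Iterator[dict]:
    """Data source: hypothetical sensor readings (made-up values)."""
    yield {"sensor": "a", "temp_c": "21.5"}
    yield {"sensor": "b", "temp_c": ""}  # missing reading
    yield {"sensor": "a", "temp_c": "22.5"}


def ingest(events: Iterable[dict]) -> Iterator[dict]:
    """Ingestion: pass events along one at a time, as a stream consumer would."""
    for event in events:
        yield event


def process(events: Iterable[dict]) -> Iterator[dict]:
    """Processing: drop incomplete events and convert types."""
    for event in events:
        if event["temp_c"]:
            yield {"sensor": event["sensor"], "temp_c": float(event["temp_c"])}


def store(events: Iterable[dict]) -> list:
    """Storage: an in-memory list stands in for a warehouse or data lake."""
    return list(events)


def analyze(table: list) -> dict:
    """Analysis: average temperature per sensor."""
    readings = defaultdict(list)
    for row in table:
        readings[row["sensor"]].append(row["temp_c"])
    return {sensor: sum(vals) / len(vals) for sensor, vals in readings.items()}


table = store(process(ingest(source())))
report = analyze(table)
print(report)
```

Each stage only depends on the output of the previous one. The same property lets real pipelines swap one tool for another without rewriting the whole flow.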

Real-World Use Cases

- E-commerce – Product recommendations (Amazon, Flipkart)

- Banking – Fraud detection and risk analysis

- Healthcare – Patient data analysis and prediction

- Marketing – Customer behavior insights

- Smart Cities – Traffic and energy optimization

Challenges

- Ensuring data security and privacy

- Managing high infrastructure costs

- Handling poor data quality

- Finding skilled professionals

Conclusion

Big Data Analytics and Data Engineering are more than technical fields: they underpin how organizations use data to understand customer behavior, improve operations, and gain a competitive advantage. Raw data alone, however, is of little use until it is properly processed and analyzed.

This is where Data Engineering plays a critical role. It ensures that data is collected from multiple sources, cleaned, transformed, and stored efficiently. Without a strong data foundation, even the most advanced analytics tools cannot deliver accurate results.